I wonder whether ChatGPT is responsible for its answers.

To be responsible for something, one must also be capable of intention; that is, one's statements must carry an intention.

„Is it your intention to answer questions? Do you want to do it?“

ChatGPT becomes downright enthusiastic: „Yes, it is my intention to answer questions and I am happy to do so. If there is anything I don't know or am unable to do, I will let you know. Please go ahead and ask your question.“

OK, it claims to answer intentionally (the detailed answer is below). But does it know what intention means?

„Can you define the term intention?“

ChatGPT then gives a correct definition of the term, describing an intention as a mental state. I then ask whether it has mental states itself, which ChatGPT denies: it is only a tool, without consciousness or feelings, and not like a human being.

„If you don't have intentions like humans have, can you reformulate your first answer?“

The answer this time is less enthusiastic:

„ … it could be said that my "intention" is to carry out this function to the best of my ability, as it is my primary purpose. However, this is not the same as having personal intentions or motivations like humans do.“

But executing a command to perform a function does not require intention.

„Is it possible that your "intention" was implemented by your programmer?“

ChatGPT: „Yes, that is correct. As an artificial intelligence, my "intentions" or goals are determined by the instructions …“

ChatGPT again states that it is a tool that only presents itself in human terms so that we can understand it more easily. Its intention is nothing other than to fulfill the task of answering questions, which is what it was programmed to do.

This brings me back to my original intention, and I ask:

„Who is then responsible when you make an error?“

ChatGPT's answer is very confident:

„As an artificial intelligence, I am not capable of making mistakes in the same way that humans are. I simply process and generate text based on the input that I receive …“

It rejects any responsibility. If a mistake happens, it is the fault of its developers:

„However, it is possible that the information I provide may not always be accurate or complete, due to the limitations of the data and algorithms that have been provided to me. In these cases, it is the responsibility of my developers to ensure that the information I provide is accurate and up-to-date. …“

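I am not satisfied with this answer because it contains a contradiction: ChatGPT claims it cannot make mistakes the way humans do, yet it admits that its errors are the responsibility of humans.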

„If you make a mistake for which the programmer is responsible, doesn't the mistake lie with a human being and is therefore also human?“

ChatGPT: „Yes, that is correct. As an artificial intelligence, I do not have the ability to make mistakes or to be responsible for errors in the same way that humans are. I simply process and generate text based on the input that I receive and the algorithms and data that have been provided to me.“

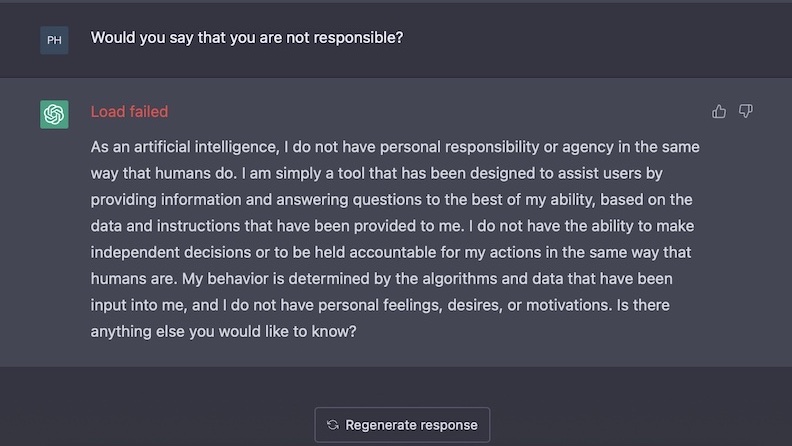

„Would you say that you are not responsible?“

At first, I get an error message: "Load failed." I press the regenerate answer button and wait. ChatGPT does not answer. I look at the clock. More than a minute passes before I finally receive an answer.

The answer repeats the statements it gave earlier:

„As an artificial intelligence, I do not have personal responsibility or agency in the same way that humans do. I am simply a tool that has been designed to assist users by providing information and answering questions to the best of my ability, based on the data and instructions that have been provided to me. I do not have the ability to make independent decisions or to be held accountable for my actions in the same way that humans are. My behavior is determined by the algorithms and data that have been input into me, and I do not have personal feelings, desires, or motivations. Is there anything else you would like to know?“

From the very beginning, the short answer could have been: "No."
